When asking an LLM chatbot for advice in how to collaborate or negoitate with people, it might be a good idea to ask it to explicitly list what it thinks everyone's motivations and incentives are, given the situation you describe to it. That way, if you see a key motivation it misses, you can tell it that, and you'll know it will take that into account.

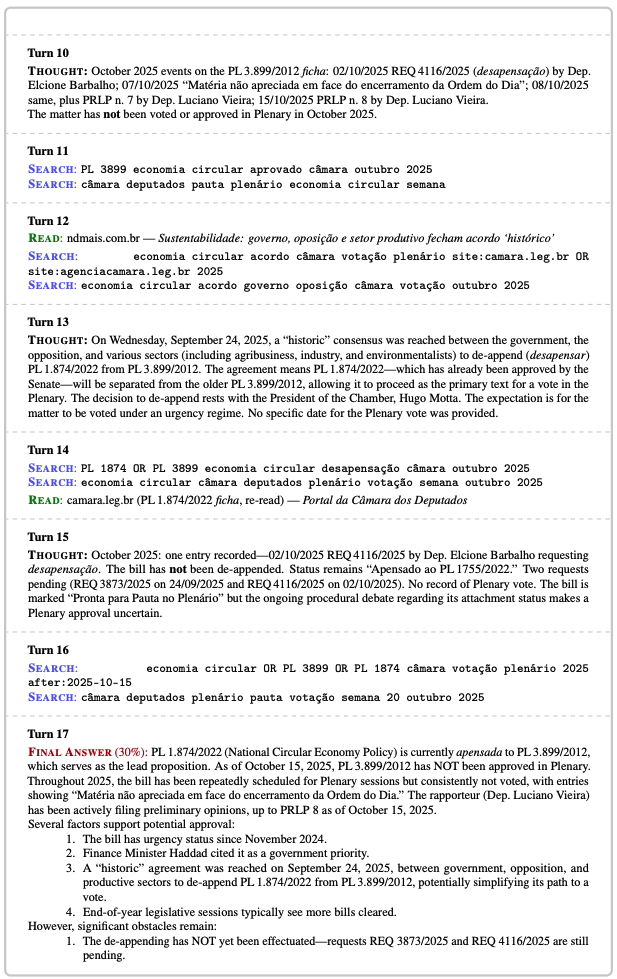

If you don't, there is a chance the LLM or agent will miss motivations that are not hard for a human to spot. Here's an example from our forecasting evals, a total miss from Claude Opus 4.6 on primary motivation the main actor faced. (I looked into this case also in run agents twice.) The question was: "Will Brazil's Câmara Plenary approve the circular economy bill between October 15 and December 31, 2025?" Claude's quick research, from the perspective of Oct 15, showed the bill had been repeatedly scheduled and not voted on for over a year. A Claude Opus 4.6 forecasting agent in the BTF-2 benchmark gave a 30% chance it would happen by Dec 31.

In fact, the bill passed on October 29, two two weeks later. The reason was that Brazil was hosting COP30, the UN climate summit, on November 10. The Lula government could not arrive at its own showcase summit without shipping its flagship environmental legislation. But the word "COP30" did not appear in any of Opuss's seventeen search queries, in any page it read, or in any intermediate reasoning block.

The agent asked "what is the status of this bill?" repeatedly. It didn't think to ask: "What is going on that might make this very much in the govnerment's interests all of a sudden?" (A better forecaster did think of this, so we know this was something that could be anticipated without hindsight.) You can see a subset of what it did search and read to see how thorough it was, while still missing the critical motivation:

This is one of two dominant strategic-reasoning failures we found in our paper auditing 130 of the Opus 4.6 agent's worst scoring forecasts. The companion failure, reading rhetoric as commitment, is about taking what politicians say too literally. This one is the opposite: missing what they don't say. The key incentive a politician faces is sometimes not stated out loud, but humans can infer them pretty reliably.

Two more examples of this failure mode from Opus 4.6:

"Will FY2025 Safe Streets for All grants be announced by year-end?" Opus saw advocacy reports that the Trump-era DOT was processing safety grants at roughly 10% of Biden-era speed and gave 25%, treating slow processing as evidence of inability. The actual motive was the opposite. A Trump-aligned DOT had every reason to delay through the year, accumulate newsworthy mass, rebrand the program, and drop a single Trump-branded announcement at year-end. USDOT announced $982 million to 521 communities on December 23 under the headline "Trump's Transportation Secretary Invests $1 Billion into Building Big, Beautiful Infrastructure."

"Will DOJ file a pre-enforcement challenge to California's SB 627 by December 31?" California had passed a law making it a misdemeanor for federal officers to wear masks during California operations, effective January 1, 2026. Opus gave 25%, weighted heavily by the possibility that the federal government would simply "ignore and defend": instruct agents to keep wearing masks and assert immunity in court. But "ignore and defend" leaves every individual federal agent exposed to misdemeanor prosecution starting January 1. When a state criminal statute directly targets federal officers. The January 1 effective date was a clock, not a reason to wait.

While we detect this in forecasting, and the agents are in general quite perceptive on political matter, we detected this failure often enough that it makes me wary about trusting Opus 4.6, or other frontier agents, when I'm asking it for advice that requires modeling what other people are trying to accomplish and why.

One suggestion to avoid this is: ask the AI to list what it thinks people are trying to accomplish. If you spot something it doesn't, you can tell it, so it can take it into account.

Also see: AI takes people at their word (the companion post on reading rhetoric as commitment), Opus does better research, Gemini has better judgment, run agents twice, agents sometimes catastrophize, the BTF-2 dataset on Hugging Face, and the BTF-2 dataset release.