AI 2027 Project | New York Times Coverage

Note (October 2, 2025): This article is being preserved as originally published, as a record of what FutureSearch predicted about AI timelines and disagreements with the AI Futures team.

Key Takeaways

- Superhuman coders arriving soon: FutureSearch places significant probability on mass deployment of superhuman coders at top frontier labs, though less than the AI Futures team

- $100B in revenue is possible: The leading frontier lab (likely OpenAI) could reach $100B in revenue by mid-2027, though bottlenecks remain

- R&D automation faces real-world constraints: Experiments taking weeks or months create bottlenecks that may slow progress more than anticipated

- Commercial incentives may dominate: Leading labs like OpenAI currently prioritize consumer revenue over transformative AI development

- Government intervention likely: Longer timelines create more opportunities for regulatory intervention before AI becomes dangerously capable

FutureSearch's Contributions to the AI 2027 Forecast

Today, AI 2027, by AI-Futures.org, released their scenario and forecasts (New York Times). It is an incredibly provocative story, and is scarily plausible according to serious experts with great track records.

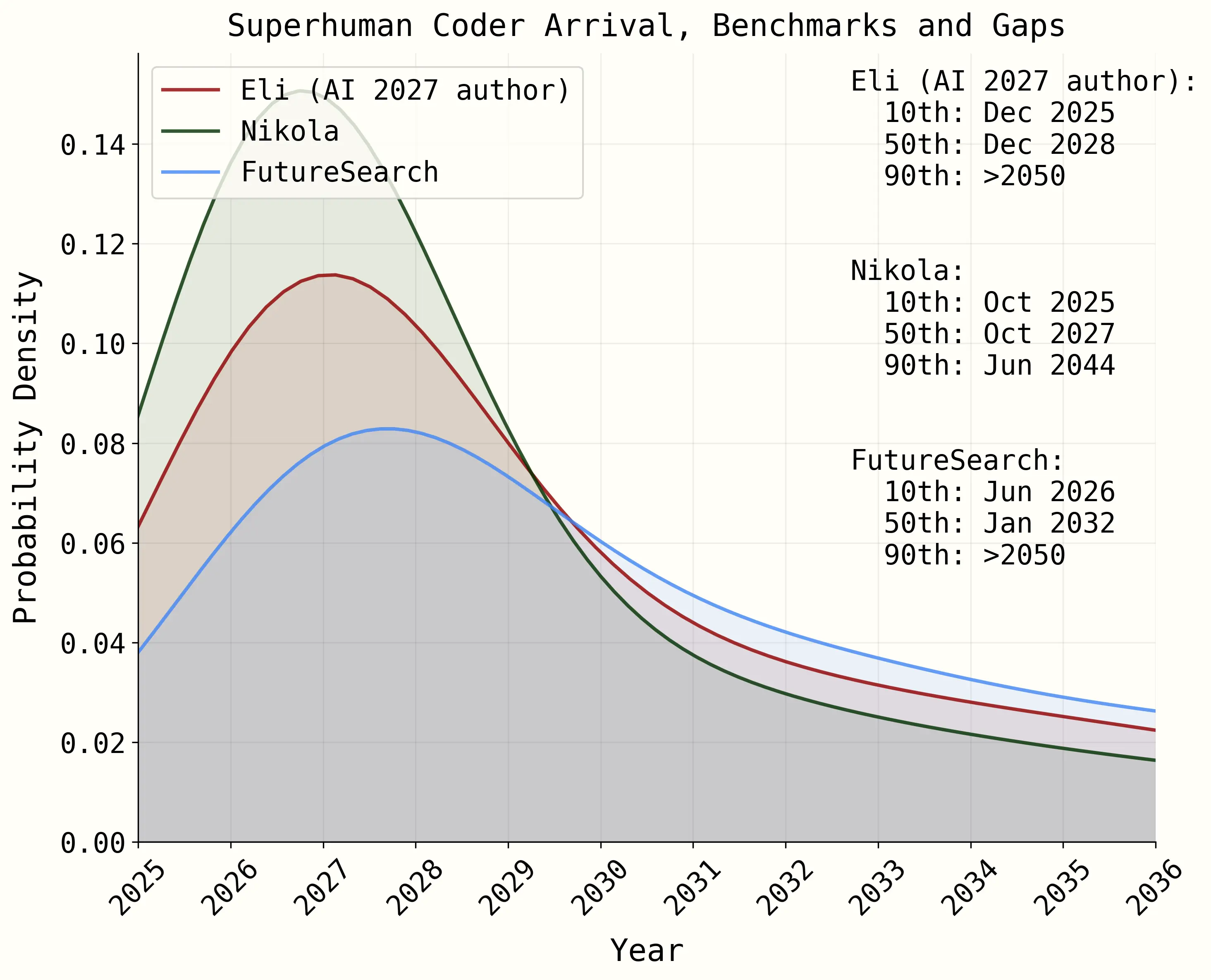

FutureSearch co-authored the Timeline Forecast, which explains when we expect the first key piece of the story to arrive: superhuman coders deployed en masse inside the top frontier lab. (We put significant probability on "soon", though not as much as the AI Futures team.)

We're grateful that this, and other core research and forecasts of ours, were included in the project.

We also contributed, and shared our disagreements, in three other key forecasts:

- Could the leading frontier lab (likely OpenAI) reach $100B in revenue by mid-2027? In their Compute Forecast, the AI Futures team speculates that it will, in order to fund the unbelievable costs of training and running its army of automated software engineers and researchers. We lay out the most likely way that this could happen.

- Once the frontier lab has great automated software engineers, we weighed in on how long it will take for them to develop great automated AI researchers.

- Once the frontier lab has automated AI researchers, we contributed forecasts on how long it will take until they create an automated AI researcher that's substantially better than any human.

These scenarios are quite speculative. We encourage you to read both their forecasts and ours and judge for yourself whether they are credible. Overall, we forecast that these developments, even if they play out the way the piece describes, will come much later than the AI Futures team predicts.

Here, as contributors to the core forecasts, we'd like to share the major points of disagreement FutureSearch had, not only with the excellent thinkers at the AI-Futures org, but amongst ourselves.

R&D Automation Faces Real-World Bottlenecks

AI research is quite different from other capabilities AI has conquered, from chess to poetry. Real-world experiments take weeks, months, or years to produce results that show what to do next. We model this as a significant bottleneck on R&D progress.
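To make the bottleneck intuition concrete, here is a minimal sketch using an Amdahl's-law-style calculation. It is not the model used in our forecasts. The function name `overall_speedup`, the fractions, and the speedup factors are all hypothetical, chosen only for illustration. The assumption is that some fraction of each research cycle is wall-clock experiment time that automation cannot shorten, while the remainder (coding, analysis, ideation) can be accelerated.

```python
def overall_speedup(experiment_fraction: float, thinking_speedup: float) -> float:
    """Amdahl's-law-style speedup of a research cycle.

    experiment_fraction: share of the cycle spent waiting on real-world
        experiments, assumed here not to speed up at all (illustrative).
    thinking_speedup: factor by which automation accelerates everything else.
    """
    if not 0.0 <= experiment_fraction <= 1.0:
        raise ValueError("experiment_fraction must be between 0 and 1")
    if thinking_speedup <= 0:
        raise ValueError("thinking_speedup must be positive")
    accelerated_share = (1.0 - experiment_fraction) / thinking_speedup
    return 1.0 / (experiment_fraction + accelerated_share)


if __name__ == "__main__":
    # Hypothetical values, not taken from any forecast.
    for fraction in (0.1, 0.5, 0.9):
        for speedup in (10, 100, 1000):
            result = overall_speedup(fraction, speedup)
            print(f"experiments={fraction:.0%}, automation={speedup}x "
                  f"-> overall {result:.2f}x")
```

Under these assumptions, the overall speedup can never exceed one divided by the experiment fraction, no matter how fast the automated work becomes. If half of a cycle is spent waiting on experiments, the cycle gets at most twice as fast.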

Human programmers and researchers need a vast amount of context about the real world, and about their organization and coworkers (human or AI), to inform complexity trade-offs. While AI 2027 does take this into account, we think it could be underestimating how much this will slow down progress.

Commercial Success May Trump the Race to AGI

So far OpenAI, the leading contender to be the company in the AI 2027 story, has spoken more about consumer revenue growth and less about transformative AI.

The AI 2027 scenario requires at least one frontier lab to dedicate the majority of its resources to building AI for its own internal use. We have reason to doubt that many labs will.

For a detailed analysis of OpenAI's revenue trajectory, see our comprehensive revenue forecast.

Government Intervention Could Reshape the Timeline

In AI 2027, the US government gets involved in a significant way once AI is already incredibly capable and dangerous. If the process takes longer than AI 2027 projects, as we predict it will, there will be significantly more time for the US government to intervene.

Even actions like the significant tariffs against semiconductor suppliers abroad, enacted the day before AI 2027 went live, suggest that governments have a much larger role to play.

The AI 2027 team decided not to consider this for the sake of the narrative, but it might turn out to be the single most important consideration on how things play out.

About the Forecasters

Tom Liptay - Director of Forecasting at FutureSearch, PhD from MIT in AI and cognitive science, former researcher at MIT's Brain and Cognitive Sciences department.

Finn Hambly - Forecaster with background in theoretical physics and AI alignment research.

Sergio Abriola - Math PhD and top Metaculus forecaster with track record in technical forecasting.

Tolga Bilge - Superforecaster and policy researcher specializing in AI governance.

Related Reading

- The Bull Case for OpenAI

- Real-world evals of OpenAI's o1, the first "thinking" model

- OpenAI's financials: a Case Study of claims vs. reality

Press Coverage

- This AI Forecast Predicts Storms Ahead (New York Times, April 3, 2025)